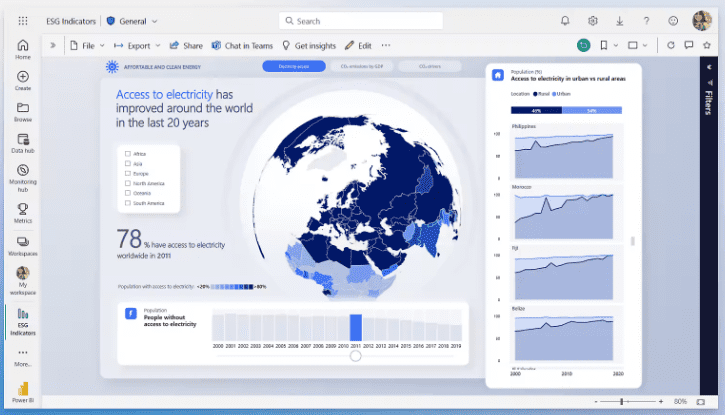

Choosing a dashboarding platform is a strategic move. You're picking the front door to your data culture, the daily workspace for analysts, and the canvas executives will use to steer the ship. Power BI has been the default choice for a lot of teams over the last few years. The question on everyone's roadmap now is simple: will Power BI still be the right dashboarding tool for 2026?

What follows is a practical, honest look at where Power BI shines, where it struggles, and how it stacks up against the alternatives. I’ll break down the decision by use case, size of team, governance requirements, and how much you plan to lean on AI. The goal is clear guidance you can use during budget season and architecture reviews.

TL;DR summary for busy leaders

- Power BI remains a top pick for Microsoft-centric organizations that want unified governance, solid AI-assisted analytics, and tight integration with Office 365 and Azure

- It is an excellent fit for enterprise self-service BI at scale, especially if you plan to standardize on Microsoft Fabric

- Teams with heavy SQL-first modeling inside a modern data stack can still use Power BI effectively, though they should test semantic model performance early under their chosen storage mode (Import, DirectQuery, or Direct Lake)

- Design-forward, consumer-grade data apps still favor Tableau for some scenarios

- If your analytics strategy revolves around embedded analytics in a SaaS product, evaluate Power BI Embedded next to Looker and Qlik to compare licensing, caching, and developer ergonomics

- For 2026 roadmaps, the deciding factors will be governance, performance on near-real-time data, AI productivity, and TCO under Fabric

Why the 2026 timeline matters

Tooling cycles move fast, yet platform choices stick for years. By 2026, most data teams will have re-platformed at least part of their stack toward lakehouse patterns. Semantic layers will be table stakes. CIOs will ask how AI copilots reduce time to insight while staying compliant. If you lock into a dashboarding tool that cannot keep up with those expectations, switching later will be expensive.

The good news: Power BI has already been repositioned inside Microsoft Fabric, which aims to unify data engineering, lakehouse storage, the semantic layer, and BI in one platform. That direction answers a lot of "what if" questions for 2026. The choice becomes less about a standalone dashboard tool and more about whether you want a single-vendor analytics cloud with BI as a core surface.

Strengths that make Power BI a safe 2026 bet

1) Tight integration across the Microsoft stack

If your company runs on Azure, Office 365, and Teams, the integration story is hard to beat. Single sign-on, Microsoft Entra ID (formerly Azure Active Directory) security groups, and row-level security policies feel native. Sharing reports in Microsoft Teams reduces friction. Excel users can connect to the same semantic models and keep their familiar workflows without extra training.

Why it matters in 2026: Distributed teams will keep using Teams for async updates and meeting recaps. Having KPIs show up where work already happens raises adoption and shortens feedback loops.

2) A unified data-to-dashboards path with Fabric

Fabric brings ingestion, transformation, lakehouse storage, notebooks, the semantic model, and Power BI under one roof. That simplifies ownership and governance. The bet is that fewer handoffs and fewer moving parts mean faster delivery and fewer “who owns this” debates.

Why it matters in 2026: Boards and auditors will keep pushing for clean lineage and consistent definitions. A single catalog and semantic layer reduces metric drift across departments.

3) AI-assisted productivity

Power BI’s Copilot and natural-language features help users build visuals, write DAX, summarize dashboards, and propose insights. AI in BI is no longer a novelty. The win is speed. Analysts spend less time translating requirements into visuals. Stakeholders get guided analysis instead of blank canvases.

Why it matters in 2026: As workloads grow, AI support becomes the force multiplier for small analytics teams. You want a tool that speeds up the routine 80 percent of tasks without dumbing down the analysis.

4) Enterprise security and governance

Power BI offers mature governance patterns. Examples include sensitivity labels, information protection, row-level and object-level security, deployment pipelines, and tenant settings that control data export and external sharing.

Why it matters in 2026: Data privacy laws and internal policies will not relax. Security-first defaults make it easier for IT to sleep at night while still empowering self-service.

5) Price-to-value at scale

Licensing can be nuanced, yet for large Microsoft customers the overall TCO often compares favorably. There are predictable license tiers, plus Fabric capacity options for pooled compute. When paired with the rest of the Microsoft stack, procurement is simpler and vendor management is easier.

Why it matters in 2026: Budgets might tighten. Predictable costs help you plan growth without surprise overages.

Where teams hit friction with Power BI

Design polish and data storytelling

You can produce clean, executive-ready dashboards in Power BI. That said, some designers feel more freedom in Tableau for pixel-perfect layouts and nuanced storytelling. If you need highly bespoke visuals for customer-facing portals or public data journalism, you might favor a tool with more granular layout control.

DAX learning curve and semantic modeling

DAX is powerful. It also trips up newcomers. If your analysts mostly work in SQL and Python, you’ll need to invest in modeling fundamentals and DAX training. The move toward a centralized semantic layer helps, though the mental model still matters.

Performance on very large near-real-time data

Power BI has improved with DirectQuery, aggregations, and Direct Lake patterns inside Fabric. Still, teams pushing sub-minute freshness on massive datasets must prototype carefully. You want a performance plan, not wishful thinking.

Versioning and developer workflows

Deployment pipelines, tabular tooling, and Git integration have progressed. The experience is better than it used to be. Engineers coming from software development still ask for deeper test automation, environment parity, and code review patterns that feel native. Expect to invest in a documented workflow.

Power BI vs Tableau vs Looker vs Qlik in 2026

Use this table as a quick reference during vendor conversations. Treat it as directional guidance you can tailor to your stack.

| Criterion | Power BI | Tableau | Looker | Qlik |
| --- | --- | --- | --- | --- |
| Core strength | Microsoft integration and Fabric unification | Visual design and storytelling | Modeled metrics and embedded analytics | Associative engine and in-memory speed |
| Semantic layer | Strong via Fabric and tabular models | Improving, less central than Power BI | Core to the product | Supported, with a different mental model |
| AI features | Copilot for report building and analysis | Growing AI helpers | Strong forecasting and LookML assist | Insight suggestions, associative exploration |
| Governance | Mature enterprise controls | Mature, strong enterprise support | Strong with governed metrics and roles | Mature, robust security |
| Embedded analytics | Power BI Embedded in Azure | Available via Tableau Embedded | Very strong developer APIs | Solid embedding options |
| Design flexibility | Good, can require add-ins for bespoke needs | Excellent for storytelling polish | Good, web-native look | Good with responsive designs |
| Cost structure | Favorable in Microsoft shops with Fabric capacity | Premium pricing tier for scale | License by user and capacity | Varies by deployment |
| Best fit | Microsoft-centric enterprise and self-service at scale | Design-forward analytics teams | Product teams embedding governed metrics | Mixed data sources with fast associative exploration |

Key decision factors for 2026

1) Your data architecture direction

- Planning a lakehouse strategy with a single vendor: Power BI with Fabric is a natural pairing

- Running a best-of-breed modern data stack with Snowflake or BigQuery and a neutral semantic layer: test Power BI's storage modes against your warehouse and caching patterns. Direct Lake reads Delta tables in OneLake, so it only applies if you mirror or land warehouse data there; otherwise benchmark Import, DirectQuery, and composite models

- Expecting to standardize metrics centrally: Power BI works well if you lean into tabular models. If you already use a separate semantic layer, confirm where metric definitions and tooling overlap

2) Governance and compliance stance

- Strict control over data export: Power BI's tenant settings, sensitivity labels, and RLS help you lock down risky actions while still allowing collaboration

- Lineage and catalog expectations: Fabric's OneLake and shared models keep definitions consistent across business units

3) Analyst productivity

- If your analysts split time between SQL, Excel, and data storytelling, Power BI shortens handoffs

- If your team contains design specialists who obsess over custom visuals and layouts, put Tableau into a build-off and judge by stakeholder feedback

4) Embedded analytics needs

- Building analytics features into a customer-facing SaaS app: compare Power BI Embedded with Looker and Qlik. Assess SDK ergonomics, pricing, and caching. Run an end-to-end prototype that covers SSO, multi-tenant isolation, white labeling, and performance

5) AI strategy

- If your executives want guided insights and natural-language questions in meetings, Power BI’s Copilot roadmap is relevant

- If you prefer AI outside the BI tool, ensure the BI platform exposes clean APIs and a stable semantic layer so LLM apps can reference governed metrics
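For the second option, one way an LLM app can reuse governed metrics is to send DAX queries to a published semantic model through the Power BI REST API's Execute Queries endpoint. The sketch below only builds the request with Python's standard library and never sends it. The dataset ID, the token, and the `[Total Revenue]` measure are placeholders, and you should confirm the endpoint's current limits, permissions, and tenant settings in the REST API reference before relying on it.

```python
import json
import urllib.request

# Power BI REST API root for the signed-in user's tenant.
API_ROOT = "https://api.powerbi.com/v1.0/myorg"


def build_execute_queries_request(
    dataset_id: str, dax_query: str, access_token: str
) -> urllib.request.Request:
    """Build (but do not send) a POST to the Execute Queries endpoint."""
    body = {
        "queries": [{"query": dax_query}],
        "serializerSettings": {"includeNulls": True},
    }
    return urllib.request.Request(
        url=f"{API_ROOT}/datasets/{dataset_id}/executeQueries",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholders: use a real dataset ID and an access token from your own auth flow.
# [Total Revenue] stands in for a hypothetical certified measure.
req = build_execute_queries_request(
    dataset_id="00000000-0000-0000-0000-000000000000",
    dax_query='EVALUATE ROW("Revenue", [Total Revenue])',
    access_token="<token>",
)
# urllib.request.urlopen(req) would send it; omitted so the sketch runs offline.
```

The point of the design is that the LLM app asks for a certified measure by name instead of re-deriving the metric in its own SQL, so the definition stays in one place.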

What a good 2026 Power BI implementation looks like

1) A clear semantic model strategy

Treat the tabular model like code. Define owners, write standards for naming and folder structures, and set rules for measures. Avoid model bloat. Publish certified datasets that downstream teams can trust. Keep one place where a metric’s definition lives.

Checklist

- Naming conventions and measure templates

- RLS and OLS definitions stored with the model

- Source-of-truth catalog entry and documentation

- Scheduled refresh or Direct Lake plan documented

- Model size targets, aggregations plan, and performance budget
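A quick way to enforce "one place where a metric's definition lives" is to scan your models for measures that share a name but not a definition. This is a minimal sketch, assuming you can export a hypothetical inventory of dataset, measure name, and DAX expression (for example from model files kept in Git). It compares normalized expression text only, so it will not catch two differently written expressions that happen to compute the same thing.

```python
from collections import defaultdict

# Hypothetical inventory: dataset name -> {measure name: DAX expression}.
inventory = {
    "Finance Certified": {
        "Total Revenue": "SUM(Sales[Amount])",
        "Gross Margin %": "DIVIDE([Gross Margin], [Total Revenue])",
    },
    "Sales Team Copy": {"Total Revenue": "SUM(Sales[Amount]) - SUM(Sales[Returns])"},
    "Ops Dashboard": {"Total Revenue": "SUM( Sales[Amount] )"},
}


def normalize(expression: str) -> str:
    # Drop whitespace and case so cosmetic differences are not reported as drift.
    return "".join(expression.split()).upper()


def find_metric_drift(inv: dict) -> dict:
    """Return measures whose name appears with more than one distinct definition."""
    variants = defaultdict(lambda: defaultdict(list))
    for dataset, measures in inv.items():
        for name, expression in measures.items():
            variants[name][normalize(expression)].append(dataset)
    return {
        name: {expr: sorted(datasets) for expr, datasets in defs.items()}
        for name, defs in variants.items()
        if len(defs) > 1
    }


drift = find_metric_drift(inventory)
for name, defs in drift.items():
    print(f"{name}: {len(defs)} competing definitions")
```

Here the "Ops Dashboard" copy only differs by spacing and is grouped with the certified version, while the "Sales Team Copy" subtracts returns and is flagged as a competing definition of `Total Revenue`.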

2) Tiered workspaces and promotion paths

Create workspaces for development, test, and production. Use deployment pipelines to move content predictably. Define who can approve promotions. Require Jira or Azure Boards tickets for promotions to production. This keeps auditors happy and reduces “surprise” changes.
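The promotion rules above can be encoded as a small gate that a release script runs before anything reaches production. This is an illustrative sketch, not a feature of Power BI deployment pipelines: the stage names, the ticket formats (Jira-style `BI-142`, Azure Boards-style `AB#142`), and the approver list are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional

STAGES = ["development", "test", "production"]
# Hypothetical ticket formats: Jira-style keys (BI-142) or Azure Boards-style IDs (AB#142).
TICKET_PATTERN = re.compile(r"^(?:[A-Z][A-Z0-9]+-\d+|AB#\d+)$")


@dataclass
class PromotionRequest:
    source: str
    target: str
    author: str
    approver: Optional[str] = None
    ticket: Optional[str] = None


def check_promotion(req: PromotionRequest, approvers: set) -> list:
    """Return policy violations; an empty list means the promotion may proceed."""
    problems = []
    if STAGES.index(req.target) != STAGES.index(req.source) + 1:
        problems.append("promotions must move exactly one stage forward")
    if req.target == "production":
        if not req.ticket or not TICKET_PATTERN.match(req.ticket):
            problems.append("production promotions need a valid ticket reference")
        if req.approver not in approvers:
            problems.append("approver is not on the production approver list")
        if req.approver is not None and req.approver == req.author:
            problems.append("authors cannot approve their own promotion")
    return problems


print(check_promotion(
    PromotionRequest("test", "production", author="sam", approver="dana", ticket="BI-142"),
    approvers={"dana", "lee"},
))  # prints []
```

Returning a list of violations rather than a single yes/no gives auditors a readable reason for every blocked promotion.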

3) Sensible self-service boundaries

Encourage power users to build personal reports on certified datasets. Restrict data source creation to a smaller data engineering group. Publish style guides and a gallery of reusable report templates. The goal is freedom within guardrails.

4) AI-ready content

Write measure descriptions and KPI definitions in plain language. Copilot works better when your metadata is clean. Add synopsis text to pages so AI can summarize with context. Make sure sensitive fields carry labels so AI features respect policy.
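If your models live in Git as Power BI Project (PBIP) files, a CI step can check that every measure carries a plain-language description before merge. A minimal sketch, assuming the model is serialized as TMSL JSON in `model.bim` (projects saved in the TMDL format would need a different parser). The sample model and measure names are made up.

```python
import json

# Made-up model.bim fragment: one measure has a description, one does not.
SAMPLE_MODEL_BIM = """
{
  "model": {
    "tables": [
      {
        "name": "Sales",
        "measures": [
          {"name": "Total Revenue", "expression": "SUM(Sales[Amount])",
           "description": "Invoiced revenue after discounts."},
          {"name": "Revenue YoY %",
           "expression": "DIVIDE([Total Revenue] - [Revenue PY], [Revenue PY])"}
        ]
      },
      {"name": "Date"}
    ]
  }
}
"""


def measures_missing_descriptions(model_bim_text: str) -> list:
    """Return 'Table[Measure]' for every measure with a missing or blank description."""
    model = json.loads(model_bim_text)["model"]
    missing = []
    for table in model.get("tables", []):
        for measure in table.get("measures", []):
            description = measure.get("description", "")
            if isinstance(description, list):
                # TMSL may store multi-line text as a list of lines.
                description = "\n".join(description)
            if not description.strip():
                missing.append(f"{table['name']}[{measure['name']}]")
    return missing


# In CI, a non-empty result would fail the check.
print(measures_missing_descriptions(SAMPLE_MODEL_BIM))  # prints ['Sales[Revenue YoY %]']
```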

5) Performance engineering as a habit

- Use aggregations, composite models, and Direct Lake where appropriate

- Track query durations and memory usage in your capacity metrics

- Build a performance regression check before each major release
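A performance regression check can be as simple as comparing query durations from the current release against the release candidate. The sketch below assumes hypothetical inputs: median durations in milliseconds per visual or query, collected however you like (for example with Performance Analyzer in Power BI Desktop or a load-test harness). The thresholds are placeholders to tune.

```python
def find_regressions(baseline_ms: dict, candidate_ms: dict,
                     tolerance: float = 0.20, min_delta_ms: float = 100.0) -> dict:
    """Flag queries slower by more than `tolerance` (relative) AND `min_delta_ms` (absolute)."""
    regressions = {}
    for query, before in baseline_ms.items():
        after = candidate_ms.get(query)
        if after is None:
            continue  # query no longer exists in the candidate; review it separately
        if after - before > min_delta_ms and after > before * (1 + tolerance):
            regressions[query] = (before, after)
    return regressions


# Hypothetical median durations in milliseconds.
baseline = {"Revenue by Region": 850, "Top 10 Customers": 1200, "KPI Card": 90}
candidate = {"Revenue by Region": 900, "Top 10 Customers": 2100, "KPI Card": 180}

print(find_regressions(baseline, candidate))  # prints {'Top 10 Customers': (1200, 2100)}
```

Requiring both a relative and an absolute increase keeps fast visuals that jitter by a few milliseconds from failing the build. In the example, `KPI Card` doubles but stays under the 100 ms floor, so only `Top 10 Customers` is flagged.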

6) Training that sticks

Run short, targeted sessions for different audiences. Executives want navigation and KPI interpretation. Analysts need DAX patterns and performance tips. New hires get a one-page quick start. Short sessions beat marathon workshops.

Common pitfalls to avoid

- Overloading a single dataset with every measure for every team. Split by domain and publish certified datasets that map to clear ownership

- Ignoring version control for models and reports. Use Git or a structured pipeline so you can roll back safely

- Treating DirectQuery like a magic switch. Without aggregations and caching, you will frustrate users with slow visuals

- Building “pretty sprawl” where each department maintains its own definitions. Lock definitions in certified models and encourage reuse

- Skipping a design system. Create a theme and a set of approved visuals so reports feel consistent across the company
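To see why DirectQuery alone is not enough, it helps to picture what aggregations do: a query that only needs coarse grain can be answered from a small pre-summarized table instead of the detail table in the source. The routing below is a deliberately simplified model of that idea with hypothetical table and column names. Power BI's own aggregation matching also depends on relationships, summarization types, and precedence settings, and non-additive measures such as distinct counts need extra care.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AggTable:
    name: str
    group_by: frozenset  # columns the summary table is grouped by
    measures: frozenset  # additive measures it stores pre-aggregated


# Hypothetical summary tables, listed in the order they should be tried (smallest first).
AGG_TABLES = [
    AggTable("Sales_Agg_Month_Region", frozenset({"Month", "Region"}), frozenset({"Revenue", "Units"})),
    AggTable("Sales_Agg_Day_Product", frozenset({"Date", "Month", "Product"}), frozenset({"Revenue"})),
]


def route_query(group_by: set, measures: set) -> str:
    """Use the first summary table that covers the query; otherwise hit the detail table."""
    for agg in AGG_TABLES:
        if group_by <= agg.group_by and measures <= agg.measures:
            return agg.name
    return "DirectQuery: Sales detail"


print(route_query({"Region"}, {"Revenue"}))  # Sales_Agg_Month_Region
print(route_query({"Product"}, {"Units"}))   # DirectQuery: Sales detail
```

Every query that falls through to the last line is a round trip to the source, which is where slow visuals come from when aggregations are missing.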

Real-world use cases that favor Power BI by 2026

Company-wide KPI portal

A conglomerate wants a single KPI hub for finance, supply chain, and sales. Executive assistants share pages in Teams. Finance controls certified measures. Country managers build local reports on the same datasets. Power BI’s governance and Teams integration make this smooth.

Embedded analytics for B2B clients

A software vendor embeds usage analytics inside their app. They need white-labeling, RLS by tenant, and predictable capacity pricing. Power BI Embedded delivers, especially if the rest of the platform runs on Azure.
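Tenant isolation is the part of that setup worth testing hardest. In a typical Power BI Embedded "app owns data" setup, the app passes an effective identity with the embed token and an RLS role filters rows by tenant. The Python below does not call Power BI; it only mimics the filter semantics so you can reason about the rule your prototype must prove, including failing closed when no tenant is supplied. Row data and names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class UsageRow:
    tenant_id: str
    feature: str
    events: int


# Hypothetical rows from a shared, multi-tenant usage table.
ROWS = [
    UsageRow("contoso", "exports", 120),
    UsageRow("fabrikam", "exports", 300),
    UsageRow("contoso", "alerts", 45),
]


def apply_tenant_rls(rows: list, effective_tenant_id: Optional[str]) -> list:
    """Mimic a role filter like [tenant_id] = <tenant of the signed-in customer>."""
    if not effective_tenant_id:
        return []  # fail closed: a missing identity must never mean "all rows"
    return [row for row in rows if row.tenant_id == effective_tenant_id]


visible = apply_tenant_rls(ROWS, "contoso")
print(sum(row.events for row in visible))  # prints 165
```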

Hybrid Excel and BI workflows

A field operations team still uses Excel for scenario planning. They connect to the same semantic model that feeds dashboards. Analysts maintain one set of definitions. Leadership reviews dashboards in Power BI while analysts run what-if models in Excel without CSV exports.

When an alternative might fit better

- Customer-facing data storytelling that needs meticulous, design-led polish: Tableau can give designers more control for that final 10 percent

- A product team that already standardized on a neutral semantic layer plus a separate visualization library for an app: Looker or a custom React-based front end can be a good match

- A discovery-driven analytics culture that leans on the associative model to surface relationships across many tables: Qlik's engine is an advantage there

Cost and licensing questions to sort early

- Will you license by user, by capacity, or both? Map this to your headcount forecast and growth plans

- Which content types will sit on capacity: datasets, paginated reports, embedded workloads?

- How will you manage external sharing with partners and suppliers? Align tenant settings with legal and procurement

- What is your plan for test environments? Budget capacity for performance testing before production rollouts

A short TCO model helps. Include licenses, capacity, developer time, support, and the cost of alternative tools you would have to purchase if you picked a different BI layer. When everything lives under one vendor, bundles can reduce spend.
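A TCO model does not need to be elaborate. This minimal sketch adds up the cost categories listed above and projects them against headcount growth. Every input is a placeholder you replace with your own quotes and estimates, not real Microsoft pricing. Run it once with per-user licensing and once with capacity costs to compare the two models.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class TcoInputs:
    # Annual figures you supply; nothing here reflects real vendor pricing.
    users: int
    per_user_license: float
    capacity_cost: float
    developer_hours: float
    developer_hourly_rate: float
    support_cost: float
    displaced_tool_savings: float  # spend on tools you would no longer need


def annual_tco(i: TcoInputs) -> float:
    return (
        i.users * i.per_user_license
        + i.capacity_cost
        + i.developer_hours * i.developer_hourly_rate
        + i.support_cost
        - i.displaced_tool_savings
    )


def project_tco(i: TcoInputs, years: int, user_growth: float) -> list:
    """Annual TCO per year, assuming licensed users grow by `user_growth` each year."""
    totals = []
    users = float(i.users)
    for _ in range(years):
        totals.append(annual_tco(replace(i, users=round(users))))
        users *= 1 + user_growth
    return totals


per_user = TcoInputs(users=500, per_user_license=100.0, capacity_cost=0.0,
                     developer_hours=1000, developer_hourly_rate=80.0,
                     support_cost=20000.0, displaced_tool_savings=15000.0)
print(project_tco(per_user, years=3, user_growth=0.10))  # prints [135000.0, 140000.0, 145500.0]
```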

Skills your team should invest in before 2026

- Data modeling with star schemas and a practical understanding of tabular engines

- DAX patterns for common business logic like year-over-year, rolling windows, and cohort analysis

- Performance tuning for Direct Lake and composite models

- Git-based workflows and CI checks for BI artifacts

- Visual communication basics: layout, hierarchy, typography, and color for accessibility

- Governance fundamentals: security roles, sensitivity labels, and approval processes

These skills pay off regardless of tool. If you go with Power BI, they unlock most of the platform’s value.
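The arithmetic behind year-over-year and rolling-window measures is worth understanding independently of DAX syntax. In Power BI you would typically write these as measures using time-intelligence functions such as SAMEPERIODLASTYEAR or DATESINPERIOD; the Python sketch below only shows the underlying calculation on a hypothetical monthly series.

```python
from typing import Optional

# Hypothetical monthly revenue keyed by (year, month).
monthly = {
    (2024, 1): 100.0, (2024, 2): 120.0, (2024, 3): 90.0,
    (2025, 1): 110.0, (2025, 2): 150.0, (2025, 3): 120.0,
}


def yoy_pct(series: dict, year: int, month: int) -> Optional[float]:
    """Year-over-year change versus the same month last year."""
    current = series.get((year, month))
    prior = series.get((year - 1, month))
    if current is None or not prior:
        return None  # like DIVIDE, return blank instead of dividing by zero or missing data
    return (current - prior) / prior


def rolling_avg(series: dict, year: int, month: int, window: int = 3) -> Optional[float]:
    """Average of the `window` months ending at (year, month); None if history is short."""
    values = []
    y, m = year, month
    for _ in range(window):
        if (y, m) not in series:
            return None
        values.append(series[(y, m)])
        y, m = (y, m - 1) if m > 1 else (y - 1, 12)
    return sum(values) / window


print(yoy_pct(monthly, 2025, 2))      # prints 0.25
print(rolling_avg(monthly, 2025, 3))  # average of 120, 150, and 110
```

One design choice to make explicit: this sketch returns `None` when a full window is not available, whereas a typical DAX pattern that averages over DATESINPERIOD will average whatever months have data. Decide which behavior your stakeholders expect and document it with the measure.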

A practical evaluation plan

- Pick three representative use cases: an executive KPI dashboard, a real-time operations report, and a departmental self-service scenario. If you embed analytics, include a product use case.

- Build the same use cases in two tools: Power BI and your strongest alternative. Keep scope tight. Aim for a two-week sprint per tool with clear success criteria.

- Measure what matters:

  - Time to first useful dashboard

  - Performance at expected concurrency and data volumes

  - Ease of enforcing row-level security

  - Developer workflow friction

  - Stakeholder satisfaction and adoption intent

  - Operating cost projection under real workloads

- Make a call grounded in your stack: If you are standardizing on Fabric and Azure, Power BI will likely score high. If you run a mixed stack, compare results carefully, then decide based on governance and performance, not vendor momentum.
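To keep the final call grounded, agree on criteria weights before the build-off and score each tool against them afterwards. The weights and ratings below are hypothetical, and the tools are anonymized as Tool A and Tool B on purpose; swap in your own criteria and stakeholder ratings.

```python
import math

# Hypothetical weights agreed before the build-off; they must sum to 1.0.
WEIGHTS = {
    "time_to_first_dashboard": 0.15,
    "performance": 0.25,
    "rls_ease": 0.15,
    "dev_workflow": 0.10,
    "stakeholder_satisfaction": 0.20,
    "operating_cost": 0.15,
}
assert math.isclose(sum(WEIGHTS.values()), 1.0)


def weighted_score(scores: dict) -> float:
    """Combine 1-5 ratings per criterion into one weighted score."""
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    return sum(WEIGHTS[c] * scores[c] for c in WEIGHTS)


# Made-up ratings for two anonymized tools.
tool_a = {"time_to_first_dashboard": 4, "performance": 3, "rls_ease": 5,
          "dev_workflow": 3, "stakeholder_satisfaction": 4, "operating_cost": 4}
tool_b = {"time_to_first_dashboard": 3, "performance": 4, "rls_ease": 3,
          "dev_workflow": 4, "stakeholder_satisfaction": 5, "operating_cost": 3}

for name, scores in [("Tool A", tool_a), ("Tool B", tool_b)]:
    print(name, round(weighted_score(scores), 2))
```

Fixing weights up front makes it harder for vendor momentum or a single impressive demo to dominate the decision, and the `ValueError` forces every criterion to be scored.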

FAQs for 2026 buyers

Is Power BI good for a modern data stack with Snowflake or BigQuery?

Yes, with the right model design. Choose Import, DirectQuery, or composite models based on freshness needs and data volume; Direct Lake becomes an option if you mirror or land that data in OneLake as Delta tables. Test aggregations and caching on your largest tables before committing.

Will Power BI replace our separate semantic layer?

It can if you want a single-vendor solution. If your architecture prioritizes neutrality, keep your existing semantic layer and expose models to Power BI along with other tools.

How does Power BI handle governance in a regulated environment?

It supports sensitivity labels, information protection, RLS, object-level security, tenant controls, and audit logs. Pair those with workspace promotion policies and you have a strong compliance posture.

Is Power BI the best choice for embedded analytics?

It is a strong option. The right answer depends on your pricing model, developer stack, and scale. Run a prototype against your real authentication and tenancy model.

Do we need dedicated designers to make Power BI dashboards look great?

You need a style guide, templates, and a few design basics. Power BI can look polished when you standardize themes and teach layout fundamentals.

Final verdict: is Power BI the right dashboarding tool for 2026?

For organizations that live in the Microsoft ecosystem or plan to adopt Fabric, Power BI is an excellent choice for 2026. It offers a unified path from data to dashboards, a solid semantic layer, serious governance, and AI features that save time. Teams that care deeply about design-led storytelling or that need a neutral semantic layer across a non-Microsoft stack should still run a head-to-head evaluation with Tableau or Looker, and bring Qlik into the conversation for discovery-heavy exploration.

If you want a pragmatic call: pick Power BI when your roadmap leans toward Fabric, when self-service at enterprise scale is your main goal, and when you value one integrated platform. Choose an alternative when design polish or embedded analytics with specific developer requirements outweigh the benefits of a single vendor.

That is the lens that will still make sense when 2026 arrives.

Ben is a full-time data leadership professional and a part-time blogger.

When he’s not writing articles for Data Driven Daily, Ben is a Head of Data Strategy at a large financial institution.

He has over 14 years’ experience in Banking and Financial Services, during which he has led large data engineering and business intelligence teams, managed cloud migration programs, and spearheaded regulatory change initiatives.